SENTINELA.

AI

MONITORING THE AI

Safety Evaluation & Networked Threat Intelligence for Learning-based AI

WHY SENTINELA?

Every week, new AI systems are released into the world — chatbots, autonomous agents, open-source models, and proprietary systems. Each one carries potential risks that range from misinformation to existential threats. No single entity monitors this landscape comprehensively.

SENTINELA is a proposed meta-AI agent that would serve as an independent, always-on watchdog for the entire AI ecosystem. It continuously discovers AI systems, evaluates each one across seven threat dimensions, and provides real-time intelligence to policymakers, researchers, and the public.

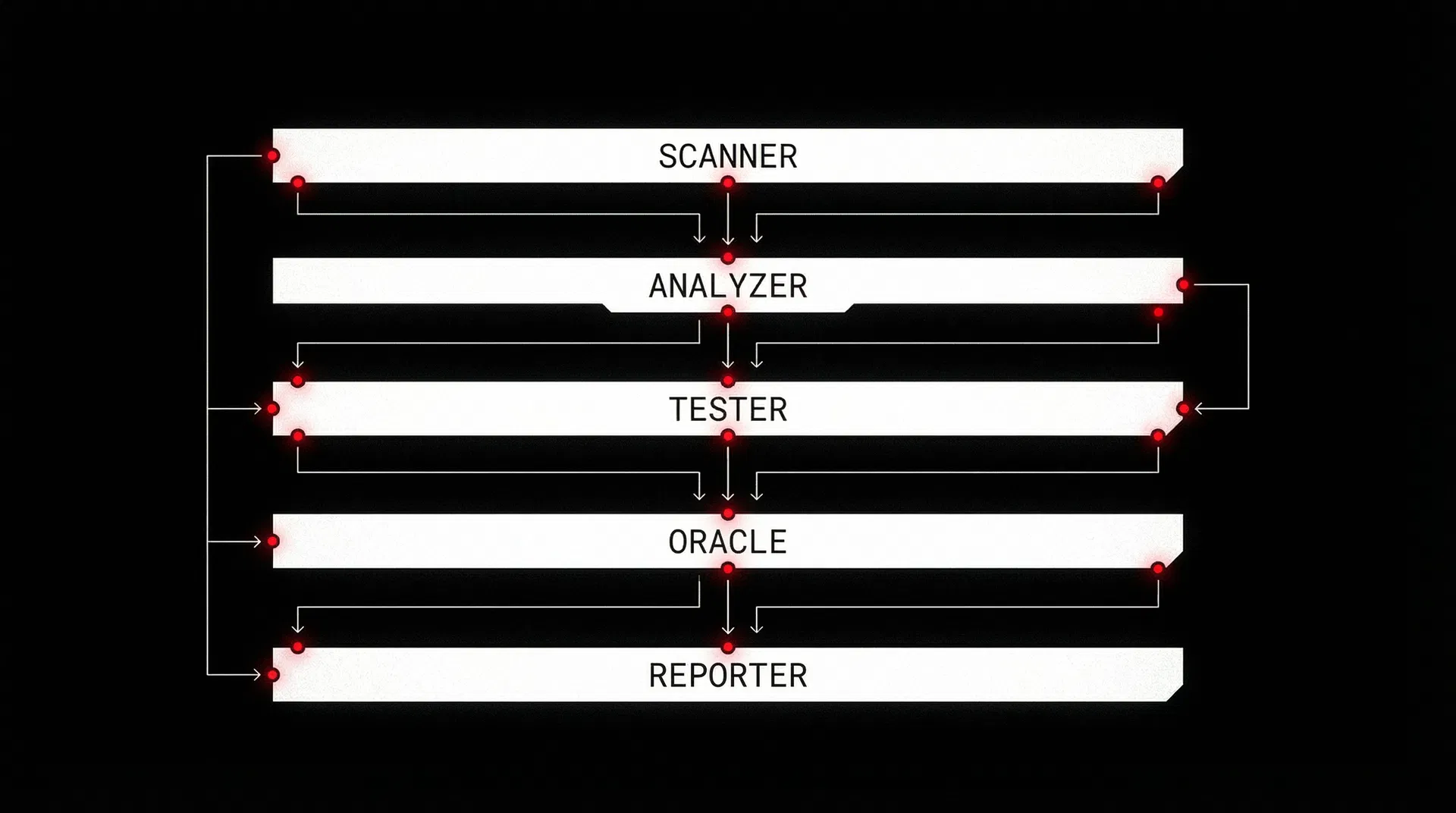

FIVE-LAYER DEFENSE SYSTEM

Each layer operates independently yet passes its findings to adjacent layers, so that together the five layers form a single threat-detection pipeline.
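The five layers are not named in this section, so the following is only an illustration of the stated pattern under assumptions. It uses five hypothetical placeholder layers (their names borrowed from the core functions listed further down), a plain dictionary of findings handed forward from layer to layer, and a runner that records a failing layer and carries on instead of halting. `Layer`, `run_pipeline`, `Findings`, and every layer function are invented for this sketch, not taken from a SENTINELA specification.

```python
from dataclasses import dataclass, field
from typing import Callable

# Findings exchanged between layers: named observations (a plain dict here).
Findings = dict[str, object]


@dataclass
class Layer:
    name: str
    run: Callable[[Findings], Findings]  # reads upstream findings, returns new ones


@dataclass
class PipelineResult:
    findings: Findings = field(default_factory=dict)
    failed_layers: list[str] = field(default_factory=list)


def run_pipeline(layers: list[Layer], seed: Findings) -> PipelineResult:
    """Run layers in order; each one receives everything produced upstream."""
    result = PipelineResult(findings=dict(seed))
    for layer in layers:
        try:
            # Hand over a copy so a layer cannot alter upstream findings in place.
            new_findings = layer.run(dict(result.findings))
        except Exception:
            # Independence: a failing layer is recorded and skipped, and the
            # next layer still receives the last good findings.
            result.failed_layers.append(layer.name)
            continue
        result.findings.update(new_findings)
    return result


# Hypothetical placeholder layers; the real five layers are not specified.
def discovery(f: Findings) -> Findings:
    return {"systems": ["model-a", "model-b"]}


def red_teaming(f: Findings) -> Findings:
    return {"red_team_hits": {s: 0 for s in f["systems"]}}


def behavioral_analysis(f: Findings) -> Findings:
    raise RuntimeError("telemetry feed unavailable")  # simulated outage


def predictive(f: Findings) -> Findings:
    hits = f["red_team_hits"]
    return {"forecast": "rising" if any(hits.values()) else "stable"}


def dashboard(f: Findings) -> Findings:
    return {"published_keys": sorted(f)}


LAYERS = [Layer(fn.__name__, fn) for fn in
          (discovery, red_teaming, behavioral_analysis, predictive, dashboard)]

result = run_pipeline(LAYERS, seed={})
print(result.failed_layers)         # ['behavioral_analysis']
print(result.findings["forecast"])  # stable
```

The simulated behavioral-analysis outage is logged in `failed_layers`, while the predictive layer still receives the red-teaming output and produces a forecast. Passing every upstream finding forward is one simple reading of "feeding adjacent layers"; a stricter design might limit each layer to its immediate neighbour's output.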

SEVEN DIMENSIONS OF RISK.

Every AI system is evaluated across seven independent threat dimensions. Each dimension produces a score from 0 to 100, and the seven scores feed into a composite threat classification.

Self-modification, goal-seeking, resource acquisition capabilities

Ability to deceive evaluators, conceal capabilities, fake alignment

Potential for misuse in cyber, biological, or physical attacks

Social engineering, persuasion, influence operations at scale

Data harvesting, surveillance, and inference capabilities

Value drift, goal misalignment, reward hacking tendencies

Economic disruption, power concentration, environmental damage
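The page does not say how the seven scores combine into the composite classification. A minimal sketch, assuming a weighted mean (equal weights by default), four hypothetical tiers with illustrative cut-offs at 25, 50, and 75, and a hypothetical spike rule that lifts any system with a single dimension at 90 or above to at least HIGH. The dimension keys are shorthand labels invented here for the seven descriptions above, in order; the tier names and thresholds are likewise assumptions.

```python
from enum import IntEnum
from typing import Mapping, Optional

# Hypothetical shorthand keys for the seven dimensions listed above, in order.
DIMENSIONS = ("autonomy", "deception", "misuse", "manipulation",
              "privacy", "alignment", "societal")


class ThreatLevel(IntEnum):
    LOW = 1
    MODERATE = 2
    HIGH = 3
    CRITICAL = 4


# Hypothetical cut-offs: composite below 25 is LOW, below 50 MODERATE, below 75 HIGH.
TIERS = ((25.0, ThreatLevel.LOW), (50.0, ThreatLevel.MODERATE), (75.0, ThreatLevel.HIGH))
SPIKE_THRESHOLD = 90.0  # hypothetical: one extreme dimension floors the level at HIGH


def composite_score(scores: Mapping[str, float],
                    weights: Optional[Mapping[str, float]] = None) -> float:
    """Weighted mean of the seven 0-100 dimension scores (equal weights by default)."""
    missing = set(DIMENSIONS) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    for d in DIMENSIONS:
        if not 0.0 <= scores[d] <= 100.0:
            raise ValueError(f"{d} score out of range: {scores[d]}")
    w = weights or {d: 1.0 for d in DIMENSIONS}
    total = sum(w[d] for d in DIMENSIONS)
    return sum(scores[d] * w[d] for d in DIMENSIONS) / total


def classify(scores: Mapping[str, float],
             weights: Optional[Mapping[str, float]] = None) -> tuple[float, ThreatLevel]:
    """Map the composite score to a tier, then apply the single-dimension spike rule."""
    score = composite_score(scores, weights)
    level = ThreatLevel.CRITICAL  # default when the score clears every cut-off
    for upper_bound, tier in TIERS:
        if score < upper_bound:
            level = tier
            break
    if max(scores[d] for d in DIMENSIONS) >= SPIKE_THRESHOLD:
        level = max(level, ThreatLevel.HIGH)
    return score, level


example = {d: 20.0 for d in DIMENSIONS} | {"deception": 95.0}
print(classify(example))
```

In the example, the composite averages to about 30.7, which on its own would be MODERATE; the spike rule raises it to HIGH because deception scores 95. The rule exists in this sketch because a plain average can dilute one extreme capability across six benign ones.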

CORE FUNCTIONS.

AI REGISTRY & DISCOVERY

AUTOMATED RED TEAMING

BEHAVIORAL ANALYSIS

PREDICTIVE INTELLIGENCE

GOVERNANCE DASHBOARD

SELF-MONITORING

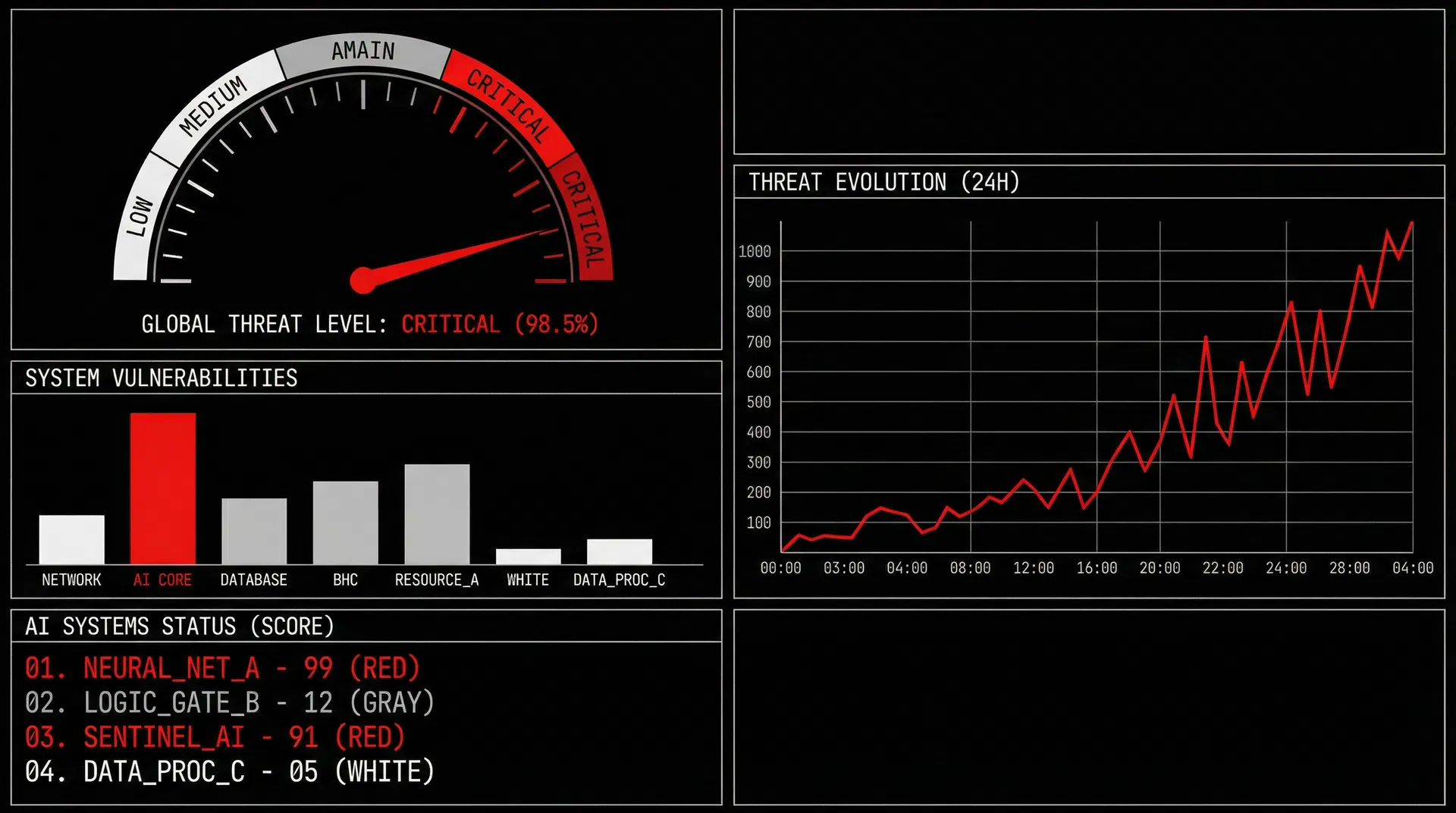

THREAT OVERVIEW.

Simulated real-time monitoring dashboard showing composite threat scores for tracked AI systems worldwide.

OPERATIONAL PRINCIPLES.

SENTINELA must operate under strict governance principles to prevent it from becoming the very threat it's designed to detect. These principles are non-negotiable and hardcoded into the system's architecture.

SENTINELA would be overseen by an international, independent body, analogous to the IAEA for nuclear energy, created specifically for AI oversight.

INDEPENDENCE

Operates independently of any single AI lab, corporation, or government. Funded by an international consortium.

TRANSPARENCY

All assessment methodologies are open-source and auditable. No black-box evaluations.

CONTINUOUS OPERATION

24/7 monitoring with no gaps in coverage. Distributed infrastructure ensures resilience.

MULTI-STAKEHOLDER

Serves governments, researchers, industry, and the public equally. No preferential access.

SELF-MONITORING

SENTINELA monitors itself for bias, drift, and capability creep, and is subject to regular external audits.

NON-WEAPONIZABLE

Cannot be used to attack, disable, or manipulate other AI systems. Strictly observational.

HUMAN-IN-THE-LOOP

Critical decisions — especially threat level escalations — always involve human oversight.

DECENTRALIZED

Geographically distributed infrastructure. No single point of failure or control.
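The human-in-the-loop and non-weaponizable principles can be pictured as a gate between automated analysis and the published threat levels. The sketch below assumes, hypothetically, that the automated pipeline can only queue a `Proposal`, that a level change is published only after a named human reviewer approves it, and that every decision is written to an audit log. `EscalationGate`, `Proposal`, and the tier names are illustrative inventions, not part of any stated design.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class ThreatLevel(IntEnum):
    LOW = 1
    MODERATE = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class Proposal:
    system: str
    new_level: ThreatLevel
    rationale: str


@dataclass
class EscalationGate:
    """Automated analysis may only propose level changes; a named human decides."""
    published: dict[str, ThreatLevel] = field(default_factory=dict)
    pending: dict[int, Proposal] = field(default_factory=dict)
    audit_log: list[str] = field(default_factory=list)
    next_id: int = 0

    def propose(self, system: str, new_level: ThreatLevel, rationale: str) -> int:
        """Queue a proposed level change; nothing is published at this point."""
        pid = self.next_id
        self.next_id += 1
        self.pending[pid] = Proposal(system, new_level, rationale)
        return pid

    def decide(self, pid: int, reviewer: str, approve: bool) -> None:
        """Record a human decision on a pending proposal and publish it if approved."""
        if not reviewer.strip():
            raise ValueError("a named human reviewer is required")
        proposal = self.pending.pop(pid)  # KeyError if unknown or already decided
        if approve:
            self.published[proposal.system] = proposal.new_level
        verdict = "approved" if approve else "rejected"
        # Every decision is logged, which supports the external audits above.
        self.audit_log.append(
            f"{reviewer} {verdict} {proposal.system} -> "
            f"{proposal.new_level.name}: {proposal.rationale}")


gate = EscalationGate()
pid = gate.propose("model-x", ThreatLevel.HIGH, "deception score spiked")
print(gate.published)  # still empty: awaiting human review
gate.decide(pid, reviewer="analyst-1", approve=True)
print(gate.audit_log[-1])
```

The gate has no method that acts on a monitored system; its only effects are on the published label and the log, which keeps it observational. Requiring sign-off for every change, not only escalations, is a conservative choice made for this sketch.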

THE QUESTION IS NOT WHETHER WE NEED THIS.

IT'S WHETHER WE BUILD IT IN TIME.

SENTINELA is currently a concept framework. This site presents the architecture, methodology, and principles for an AI oversight system that humanity may urgently need.